Understanding Caching in TangleML

TangleML's caching system is one of its most powerful features, designed to dramatically reduce compute time and accelerate your ML pipeline iterations. Unlike traditional pipeline systems, TangleML implements sophisticated caching strategies that can save hours or even days of computation time.

Why caching matters

Imagine you have a pipeline that runs for two days, involving data preprocessing, model training, and evaluation. Without caching:

- Every pipeline run would start from scratch

- Small changes would require complete re-execution

- Parallel experiments would duplicate work unnecessarily

With TangleML's caching:

- Previous computations are automatically reused

- Only changed components are re-executed

- Multiple pipeline variants share common computations

A pipeline that took a full day to run initially might complete in minutes on subsequent runs if most components can use cached results!

How caching works

The cache key

TangleML generates a unique cache key for each task execution based on:

- Container specification - The exact container image, commands, and environment

- Input data hashes - Cryptographic hashes of all input artifacts

When a task is about to execute, TangleML checks if an identical task has been run before by comparing cache keys.

Content-based vs. lineage-based hashing

TangleML uses content-based hashing, which is superior to the lineage-based approach used by most other systems:

| Feature | Content-Based (TangleML) | Lineage-Based (Others) |

|---|---|---|

| Component upgrades | Downstream uses cache if outputs are identical | Must re-execute all downstream |

| Non-deterministic components | Downstream uses cache when output doesn't change | Always re-executes downstream |

| Constant value replacement | Can swap hardcoded values with components without breaking cache | Cache breaks when lineage changes |

Content-based hashing means that if a component produces the same output (same hash), all downstream components can still use their cached results, even if the upstream component was re-executed!

Reusing running executions

One of TangleML's unique features is the ability to reuse still-running executions. This is crucial for parallel pipeline submissions:

Pipeline 1: [Data processing] → [Training A] → [Evaluation]

Pipeline 2: [Data processing] → [Training B] → [Evaluation]

Pipeline 3: [Data processing] → [Training C] → [Evaluation]

When submitted simultaneously:

- Other systems: Launch 3 separate data processing tasks

- TangleML: All pipelines share the same data processing execution

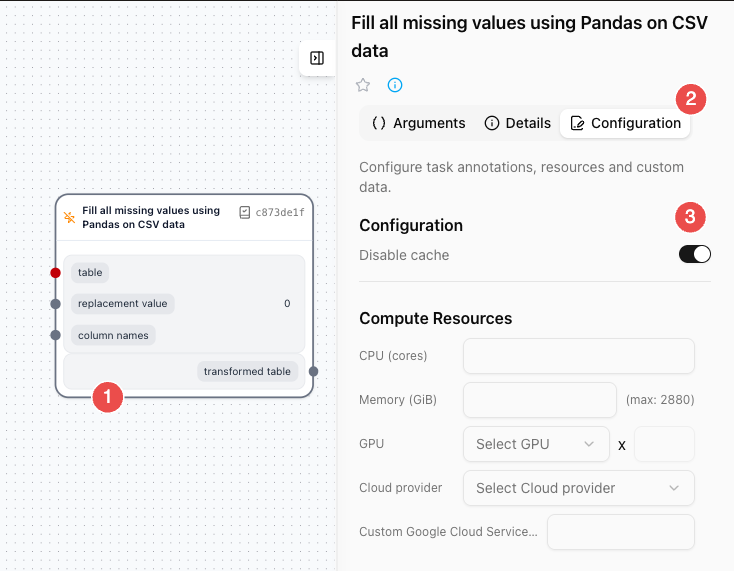

Cache configuration

Controlling cache behavior

You can control caching behavior at the component task level:

| Setting | Description | Example Use Case |

|---|---|---|

| Default | Cache enabled, no staleness limit | Most components |

| Disabled | max_cache_staleness: "P0D" | Components reading volatile external data |

| Time-limited | max_cache_staleness: "P7D" | Data that becomes stale after a week |

Components should be pure functions - given the same inputs, they should produce the same outputs. If your component reads from volatile sources, consider adding date parameters or disabling caching.

...

implementation:

graph:

tasks:

...

Fill all missing values using Pandas on CSV data:

executionOptions:

cachingStrategy:

maxCacheStaleness: P0D

Cache staleness format

Cache staleness uses RFC3339 duration format:

P30D- 30 daysP7D- 7 daysPT1H- 1 hourP0D- Disable caching

Large artifacts are purged after 30 days due to data retention policies.

What survives purging

After purging, you can still see:

- File sizes

- Content hashes

- Directory structure

- Small values (metrics, parameters)

- Execution status and timing

Cache breaking strategies

When you need fresh data despite caching:

1. Cache breaker input (nonce)

The nonce is used to introduce non-determinicity to the component, ensuring that the component is re-executed even if the inputs haven't changed. Nonce can be a random string or the current time from the caller.

@component

def search_web(

query: str,

nonce=None, # type: str | None

) -> Results:

# Pass timestamp or random value to nonce parameter

...

In case of scheduled runs, pipelines may use a placeholder for incoming timestamp.

- Template with placeholder

- Pipeline with actual value

...

inputs:

- name: pipeline creation time

type: String

default: '{{CreationTime}}'

...

...

inputs:

- name: Pipeline Creation Time

type: String

default: '2025-11-07 05:30:07.837468+00:00'

...

This substituted input can be used to pass the cache breaker value to the component:

...

arguments:

nonce:

graphInput:

inputName: Pipeline Creation Time

2. Disable caching via maxCacheStaleness

For components that should never cache:

...

implementation:

graph:

tasks:

...

Fill all missing values using Pandas on CSV data:

executionOptions:

cachingStrategy:

maxCacheStaleness: P0D

Summary

TangleML's caching system provides:

- Automatic optimization - No manual cache management needed

- Intelligent reuse - Content-based hashing and running execution sharing

- Flexible control - Configure staleness and breaking strategies

- Performance gains - Dramatic reduction in execution time

By understanding and leveraging these caching capabilities, you can iterate faster, experiment more freely, and make the most of your computational resources.